AWS EKS – Part 8 – Deploy Worker Nodes using Spot Instances

In this article, we explain how to deploy worker nodes on Spot Instances to reduce costs. Before doing so, you must take some considerations into account and follow a few best practices to avoid service disruptions. Spot Instances run on spare AWS capacity at a discount of up to 90% compared to On-Demand instances, but you must be aware that AWS can take the capacity back at any time with only a short notice.

Attention: Improper use of Spot Instances may cause significant downtime!

In the previous articles, we deployed EKS worker nodes using various methods (fully managed, self-managed, custom launch templates, Bottlerocket), all with the ON_DEMAND capacity type. The SPOT capacity type can be used with all of those methods as well.

Follow our social media:

https://www.linkedin.com/in/ssbostan

https://www.linkedin.com/company/kubedemy

https://www.youtube.com/@kubedemy

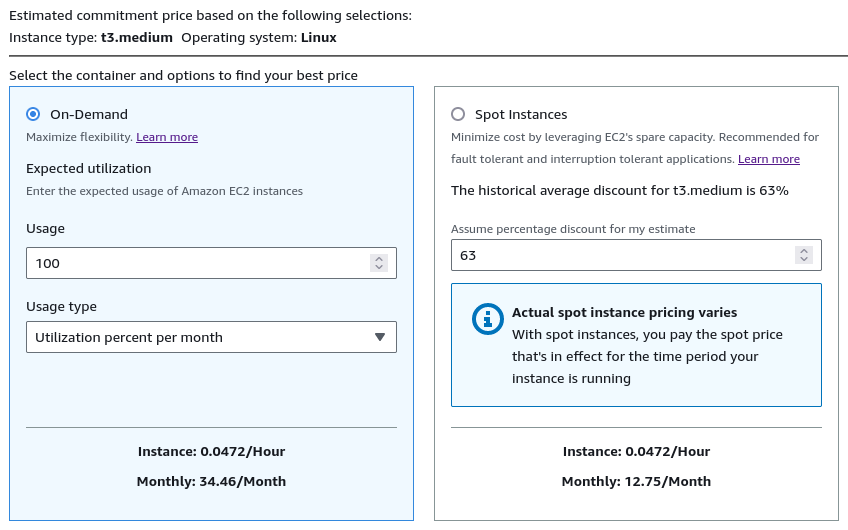

Spot vs. On-Demand pricing comparison:

Although EC2 Spot pricing varies with availability, Region, and other factors, we can compare it to On-Demand pricing by looking at the average Spot and On-Demand cost of the same instance type in the same Region.

For example, a t3.medium instance costs $34.46/month with the On-Demand type but $12.75/month with the Spot type. This simple calculation shows that the Spot price is about 37% of the On-Demand price, a little over a third.

You can also use https://calculator.aws to calculate your estimate.
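The comparison above is simple arithmetic, and a small helper makes it easy to repeat for other instance types. The sketch below is only an illustration: the function name `spot_savings` is made up for this example, and the two prices are the t3.medium figures quoted above, so substitute current prices for your own Region.

```shell
#!/usr/bin/env bash
# Monthly prices (USD) quoted in this article for t3.medium.
# They are examples only; real Spot prices change over time.
on_demand=34.46
spot=12.75

# Hypothetical helper: prints the Spot/On-Demand ratio and the savings.
# awk does the floating-point math that shell arithmetic cannot do.
spot_savings() {
  awk -v od="$1" -v sp="$2" 'BEGIN {
    printf "spot/on-demand ratio: %.1f%%\n", sp / od * 100
    printf "savings: %.1f%%\n", (1 - sp / od) * 100
  }'
}

spot_savings "$on_demand" "$spot"
```

With the figures above, this prints a ratio of 37.0% and savings of 63.0%.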

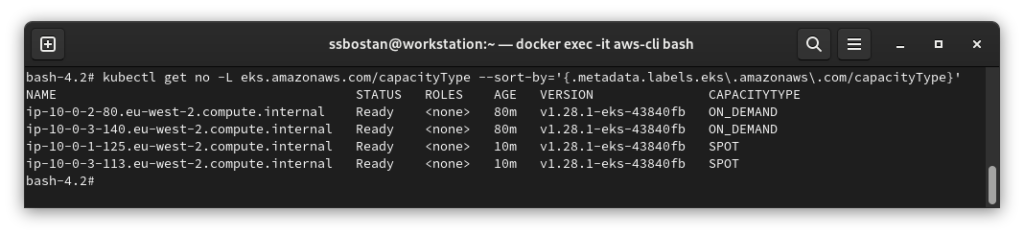

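Find the capacity type of EKS worker nodes:

AWS EKS adds a special label, eks.amazonaws.com/capacityType, to the worker nodes of managed node groups to specify their capacity type (ON_DEMAND or SPOT). Self-managed nodes do not get this label automatically, so their column stays empty unless you add the label yourself. You can list all the available worker nodes and their capacity types by executing the following kubectl command: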

kubectl get no -L eks.amazonaws.com/capacityType
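The same label can also be used as a selector to list only the nodes of one capacity type. The following is a minimal sketch: the wrapper name `list_nodes_by_capacity` is hypothetical, and it assumes kubectl is already configured for your cluster.

```shell
#!/usr/bin/env bash
# Hypothetical wrapper: list nodes of a single capacity type.
# $1 is SPOT (default) or ON_DEMAND, the two values EKS uses for this label.
list_nodes_by_capacity() {
  local capacity_type="${1:-SPOT}"
  # -l filters by label; -L adds the instance type as an extra column.
  kubectl get nodes \
    -l "eks.amazonaws.com/capacityType=${capacity_type}" \
    -L node.kubernetes.io/instance-type
}

# Usage (requires access to a cluster):
#   list_nodes_by_capacity SPOT
#   list_nodes_by_capacity ON_DEMAND
```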

How Spot capacity works in the EKS lifecycle:

When we ask AWS to assign Spot capacity for EKS worker nodes, AWS draws it from the Spot pools with the most available capacity. It also turns on the CapacityRebalance option by default: when a running instance receives a rebalance recommendation, a new worker node is deployed before the running one is reclaimed, which reduces workload downtime. In addition, AWS sends a Spot interruption notice two minutes before it reclaims an instance, which gives the system a short window to deploy new worker nodes before the running ones are terminated.
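To confirm that Capacity Rebalancing is enabled for a node group, you can look at the Auto Scaling group behind it. This is a sketch under a few assumptions: the function name `nodegroup_capacity_rebalance` is invented for this example, the node group is a managed one (so EKS reports its Auto Scaling group), and your AWS CLI credentials may read both EKS and Auto Scaling.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the Auto Scaling group of a managed node group
# together with its CapacityRebalance flag.
nodegroup_capacity_rebalance() {
  local cluster="$1" nodegroup="$2" asg

  # A managed node group exposes its Auto Scaling group under resources.
  asg=$(aws eks describe-nodegroup \
    --cluster-name "$cluster" \
    --nodegroup-name "$nodegroup" \
    --query 'nodegroup.resources.autoScalingGroups[0].name' \
    --output text) || return 1

  # CapacityRebalance is a boolean attribute of the Auto Scaling group.
  aws autoscaling describe-auto-scaling-groups \
    --auto-scaling-group-names "$asg" \
    --query 'AutoScalingGroups[0].[AutoScalingGroupName,CapacityRebalance]' \
    --output text
}

# Usage (cluster and node group names are the ones used in this article):
#   nodegroup_capacity_rebalance kubedemy application-spot-managed-workers-001
```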

When an interruption happens, we end up in one of the following two states:

- New nodes become Ready before the interruption notice arrives: in this state, there is no issue. New nodes are available, EKS cordons and drains the old nodes, and the evicted Pods are rescheduled onto the new nodes.

- New nodes do not become Ready before the 2-minute notice expires: in this state, we may face node pressure, workload interruption and downtime until the new nodes become available and ready to accept workloads.
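To see what the two-minute notice looks like from the instance's point of view, you can query the EC2 instance metadata service (IMDSv2). Managed node groups handle the notice for you, so the sketch below is purely illustrative. It assumes it runs on the Spot instance itself with curl installed, and the function names `imds_get` and `check_spot_interruption` are made up for this example.

```shell
#!/usr/bin/env bash
# Instance metadata endpoint, reachable only from inside the EC2 instance.
IMDS="http://169.254.169.254/latest"

# Fetch a metadata path using an IMDSv2 session token.
imds_get() {
  local token
  token=$(curl -sf -X PUT "$IMDS/api/token" \
    -H "X-aws-ec2-metadata-token-ttl-seconds: 60") || return 1
  # -f makes curl fail on HTTP errors such as 404.
  curl -sf -H "X-aws-ec2-metadata-token: $token" "$IMDS/meta-data/$1"
}

# spot/instance-action returns 404 until AWS schedules an interruption;
# after that, it returns JSON with the action and the time it will happen.
check_spot_interruption() {
  local notice
  if notice=$(imds_get spot/instance-action); then
    echo "interruption notice: $notice"
  else
    echo "no interruption notice"
    return 1
  fi
}

# Usage on a Spot instance, for example polling every 5 seconds:
#   until check_spot_interruption; do sleep 5; done
```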

Spot instance worker nodes deployment procedure:

- Select worker node deployment method.

- Find proper instance types (the more, the merrier).

- Create EKS Spot instance node groups.

- Check EC2 Spot Requests to investigate the procedure.

Step 1 – Select worker node deployment method:

In this article, we use fully managed node groups as they can be created quickly, and we can focus on Spot instance considerations, best practices, tips and tricks, etc.

To find the best method that fits your requirements, read the following articles:

AWS EKS – Part 3 – Deploy worker nodes using managed node groups

AWS EKS – Part 4 – Deploy worker nodes using custom launch templates

AWS EKS – Part 5 – Deploy self-managed worker nodes

AWS EKS – Part 6 – Deploy Bottlerocket worker nodes and update operator

AWS EKS – Part 7 – Deploy ARM-based Kubernetes Worker nodes

Step 2 – Find proper instance types:

In the previous articles, we used only one instance type to deploy worker nodes, for example, t3.medium. One instance type is enough if you're using the On-Demand capacity type, as AWS assigns that capacity to your account, but Spot instances need more than one. As mentioned earlier, Spot instances use spare AWS capacity, which AWS can reclaim at any time. If you assign only one instance type to your node group, that instance type can become unavailable at any moment, and you will experience massive downtime and interruption in your cluster. To reduce the chance of running out of capacity, assign as many instance types as possible, but keep in mind that all of them must have the same amount of CPU and memory. Cluster Autoscaler assumes every node in a node group has the same resources, so mixed sizes lead to scaling issues.
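Before creating a node group, it can help to sanity-check that a candidate list of instance types really shares one CPU and memory shape. The helper below is a sketch, not part of any AWS tooling: the name `check_uniform_shapes` is hypothetical, and it expects lines of "instance-type vCPUs memory-in-MiB" on standard input.

```shell
#!/usr/bin/env bash
# Hypothetical helper: verify that every line on stdin has the same
# vCPU count (column 2) and memory size in MiB (column 3).
# Exit status: 0 if uniform, 1 on a mismatch, 2 if there is no input.
check_uniform_shapes() {
  awk '
    NR == 1 { vcpus = $2; mem = $3 }
    $2 != vcpus || $3 != mem {
      printf "mismatch: %s (%s vCPU, %s MiB)\n", $1, $2, $3
      bad = 1
    }
    END {
      if (NR == 0) { print "no instance types given"; exit 2 }
      if (bad) exit 1
      printf "all %d types: %s vCPU, %s MiB\n", NR, vcpus, mem
    }
  '
}

# Usage with the AWS CLI (text output is tab-separated, which awk splits):
#   aws ec2 describe-instance-types \
#     --instance-types t3.medium t3a.medium c5.large \
#     --query 'InstanceTypes[].[InstanceType,VCpuInfo.DefaultVCpus,MemoryInfo.SizeInMiB]' \
#     --output text | check_uniform_shapes
```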

To find the available instance types for x86_64 architecture:

aws ec2 describe-instance-types \
  --filters "Name=current-generation,Values=true" \
    "Name=vcpu-info.default-vcpus,Values=2" \
    "Name=memory-info.size-in-mib,Values=4096" \
  --output json | jq -r '.InstanceTypes[] | select(.SupportedUsageClasses | index("spot")) | select(.ProcessorInfo.SupportedArchitectures | index("x86_64")) | .InstanceType' | sort

To find the available instance types for ARM64 architecture:

aws ec2 describe-instance-types \
  --filters "Name=current-generation,Values=true" \
    "Name=vcpu-info.default-vcpus,Values=2" \
    "Name=memory-info.size-in-mib,Values=4096" \
  --output json | jq -r '.InstanceTypes[] | select(.SupportedUsageClasses | index("spot")) | select(.ProcessorInfo.SupportedArchitectures | index("arm64")) | .InstanceType' | sort

Step 3 – Create Spot Instance worker nodes:

To create a node group that deploys worker nodes with the Spot capacity type, run the following command. Remember to specify as many instance types as possible.

aws eks create-nodegroup \
  --cluster-name kubedemy \
  --nodegroup-name application-spot-managed-workers-001 \
  --scaling-config minSize=2,maxSize=5,desiredSize=2 \
  --subnets subnet-0ff015478090c2174 subnet-01b107cea804fdff1 subnet-09b7d720aca170608 \
  --node-role arn:aws:iam::231144931069:role/Kubedemy_EKS_Managed_Nodegroup_Role \
  --remote-access ec2SshKey=kubedemy \
  --instance-types t2.medium t3.medium t3a.medium c5.large c5a.large c5d.large c6a.large c6i.large c6in.large \
  --ami-type AL2_x86_64 \
  --capacity-type SPOT \
  --update-config maxUnavailable=1 \
  --labels node.kubernetes.io/scope=application \
  --tags owner=kubedemy

Step 4 – Investigate Spot Requests:

When you ask EKS to deploy worker nodes with the Spot capacity type, Spot requests are created for the available capacity to be assigned. These requests use the one-time persistence policy, which means a request is not reopened after an interruption; a new request is created for each replacement instance instead. You can see the Spot requests in the AWS EC2 dashboard.
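The same information is available from the AWS CLI if you prefer the terminal over the dashboard. The following is a minimal sketch: the wrapper name `list_spot_requests` is hypothetical, and it assumes your credentials are allowed to describe Spot requests in the Region where the cluster runs.

```shell
#!/usr/bin/env bash
# Hypothetical wrapper: show open and active Spot requests as a table.
# Extra arguments (for example --region eu-west-1) are passed through.
list_spot_requests() {
  aws ec2 describe-spot-instance-requests \
    --filters "Name=state,Values=open,active" \
    --query 'SpotInstanceRequests[].[SpotInstanceRequestId,Type,State,Status.Code,InstanceId,LaunchedAvailabilityZone]' \
    --output table "$@"
}

# Usage:
#   list_spot_requests
```

The Type column should show one-time for the requests described above.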

Spot instance best practices:

- Select as many instance types as possible when creating node groups.

- Select instance types with the same amount of CPU and Memory.

- Use spot nodes only for stateless applications resilient to sudden interruptions.

- Do not use spot instances for critical applications and cluster addons.

- Do not use Spot workers for stateful applications. They mostly rely on EBS storage, and an EBS volume is bound to a single AZ: if the instance types become unavailable in the AZ where the volume was created, you can't mount it in any other AZ, and the application will go out of reach.

- Add taints to Spot nodes to prevent critical applications from being scheduled on Spot workers, and add a proper nodeAffinity to cluster addons so that they avoid Spot instances.

- Spot capacity is perfect for running Kubernetes Jobs, Argo workflows, AI/ML workflows, processing queues, stateless API endpoints, Big data ETL, etc.
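The taint and nodeAffinity practices above can be sketched with kubectl as follows. Treat this as an illustration under stated assumptions: the taint key `spot-capacity` and both function names are invented for this example, and the patch replaces any node affinity terms the target workload already has. For managed node groups, taints set with kubectl do not carry over to newly launched nodes, so the `--taints` option of `aws eks create-nodegroup` is the more durable choice.

```shell
#!/usr/bin/env bash
# Hypothetical helper: taint every node labeled as Spot so that only Pods
# with a matching toleration are scheduled there.
taint_spot_nodes() {
  kubectl taint nodes \
    -l eks.amazonaws.com/capacityType=SPOT \
    "${1:-spot-capacity}=true:NoSchedule" --overwrite
}

# Hypothetical helper: print a patch that keeps a workload off Spot nodes.
# NotIn also matches nodes that do not carry the label at all.
addon_affinity_patch() {
  cat <<'EOF'
{"spec": {"template": {"spec": {"affinity": {"nodeAffinity": {
  "requiredDuringSchedulingIgnoredDuringExecution": {"nodeSelectorTerms": [
    {"matchExpressions": [
      {"key": "eks.amazonaws.com/capacityType", "operator": "NotIn", "values": ["SPOT"]}
    ]}
  ]}
}}}}}}
EOF
}

# Usage (my-addon is a placeholder Deployment name):
#   taint_spot_nodes
#   kubectl -n kube-system patch deployment my-addon -p "$(addon_affinity_patch)"
```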

Conclusion:

Running EKS workers on Spot instances can significantly reduce your costs, but you should be aware of the possible risks and follow the best practices mentioned above.

If you like this series of articles, please share them and leave your thoughts in the comments. Your feedback encourages me to complete this extensive program and make you an AWS EKS black belt.

Follow Kubedemy Telegram https://telegram.me/kubedemy